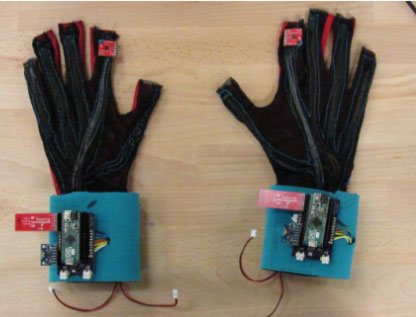

Here is a pair of gloves that can translate sign language into text and speech. Thomas Pryor and Navid Azodi designed SignAloud to recognize hand gestures that correspond to words and phrases in American Sign Language. These gloves have sensors that record hand position and movement. Data from these sensors is sent from the gloves to a computer via Bluetooth.

The computer searches the data for gestures by running it through a series of sequential statistical regressions, similar to a neural network. If the data matches a gesture, the associated word or phrase is spoken through a speaker.
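The article doesn't describe SignAloud's actual model, but the idea of "sequential regressions similar to a neural network" can be illustrated with a small sketch. This minimal Python example assumes each stage is a linear regression followed by a sigmoid, that a softmax over a fixed vocabulary scores the candidate words, and that a confidence threshold decides whether the data "matches" a gesture at all. The two-sensor input, the weights, the labels, and the function `classify_gesture` are all hypothetical, chosen only to make the flow easy to follow.

```python
import math
from typing import List, Optional, Sequence, Tuple

Vector = List[float]
Matrix = List[List[float]]

def linear(weights: Matrix, bias: Vector, x: Sequence[float]) -> Vector:
    """One regression stage: y = W x + b."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]

def sigmoid(v: Vector) -> Vector:
    return [1.0 / (1.0 + math.exp(-z)) for z in v]

def softmax(v: Vector) -> Vector:
    m = max(v)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in v]
    total = sum(exps)
    return [e / total for e in exps]

def classify_gesture(
    readings: Sequence[float],
    stages: List[Tuple[Matrix, Vector]],
    out_weights: Matrix,
    out_bias: Vector,
    labels: List[str],
    threshold: float = 0.8,
) -> Optional[str]:
    """Hypothetical classifier: returns a word, or None if no gesture matches."""
    h = list(readings)
    for w, b in stages:  # the "sequential regressions"
        h = sigmoid(linear(w, b, h))
    probs = softmax(linear(out_weights, out_bias, h))
    best = max(range(len(labels)), key=lambda i: probs[i])
    return labels[best] if probs[best] >= threshold else None

# Hypothetical model: two normalized sensor readings (say, finger flex and wrist tilt).
HIDDEN_STAGES = [([[10.0, 0.0], [0.0, 10.0]], [-5.0, -5.0])]
OUT_W = [[10.0, -10.0], [-10.0, 10.0]]
OUT_B = [0.0, 0.0]
LABELS = ["hello", "thank you"]

if __name__ == "__main__":
    for reading in ([1.0, 0.0], [0.0, 1.0], [0.5, 0.5]):
        word = classify_gesture(reading, HIDDEN_STAGES, OUT_W, OUT_B, LABELS)
        # A real system would pass `word` to a text-to-speech engine here.
        print(reading, "->", word if word else "no match")
```

The threshold is what lets the system stay silent on ambiguous input: a reading halfway between the two hypothetical gestures scores 50/50 and is rejected rather than spoken.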

MIT has more on this story. What do you think? How would you improve upon this idea?

[Source]
